AP demands clarity from tech companies on AI aimed at children

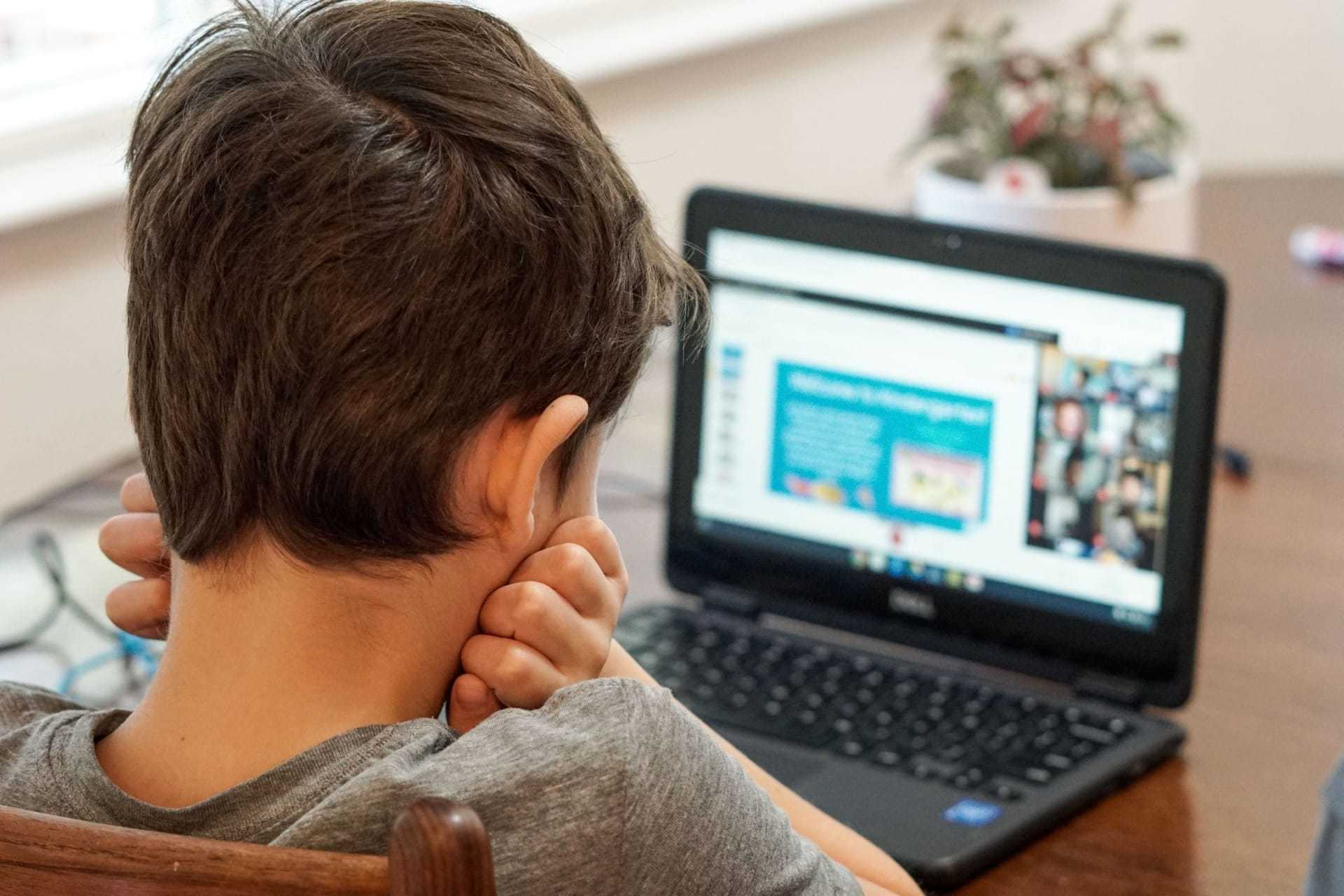

The Dutch Data Protection Authority (AP) is taking a close interest in organizations that use generative artificial intelligence (AI), particularly those that make apps for children. A growing number of these apps integrate AI chatbots, raising concerns about how user data is handled and how transparent that handling is. The AP has taken steps to ensure that these tech companies are transparent about how they use data and about whether they have set a carefully considered retention period for the data they collect.

Generative AI applications, which include the AI chatbots under scrutiny, are gaining popularity because they can generate text or images in response to specific prompts. These models are trained on large volumes of internet data, often collected through scraping, and the queries users enter into a tool can serve as a further source of data. Those queries sometimes contain personal information, so it is crucial that developers comply with privacy legislation.

In light of these developments, the AP has begun a series of actions concerning organizations that use generative AI. These include asking software developer OpenAI for clarity about its chatbot ChatGPT and about how personal data is handled when the system is trained. The AP has also joined the ChatGPT Task Force of the European Data Protection Board (EDPB), underscoring its commitment to data privacy.

To help companies in this rapidly evolving field, the AP is preparing guidance on scraping. With advances in AI showing no sign of slowing down, it is crucial that measures are put in place to protect users, especially children, from potential misuse of their data.

Source: AP demands clarity from tech company about AI aimed at children | Dutch Data Protection Authority